Nimrod Broshy

Nimrod Broshy is Director of Product at ASAPP, where he leads the Supervisor Suite — a set of products that gives enterprises visibility and control over AI-driven customer interactions. His work focuses on helping organizations optimize AI agents, quantify business impact, and identify high-value automation opportunities, while turning real-time customer conversations into actionable insights.

Why ASAPP

Agentic Enterprise

Generative AI for CX

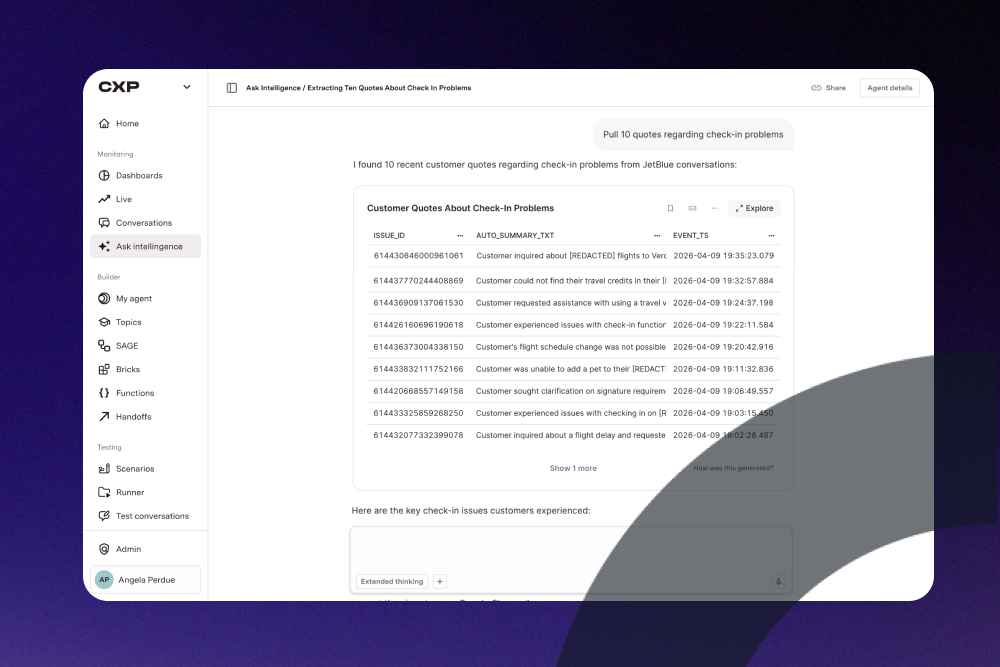

Your contact center is sitting on a goldmine: introducing Insights Agent

by Nimrod Broshy

Article

Video

May 14

2 mins

9 minutes

Generative AI for CX

Measuring Success

Agentic Enterprise

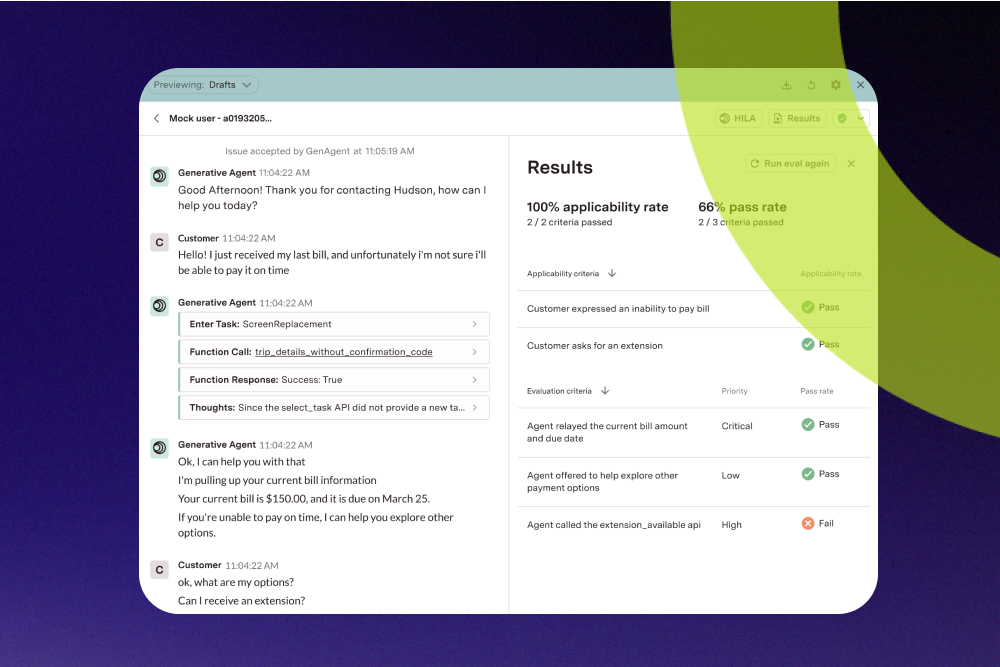

Moving beyond containment: How to truly measure the performance of your AI agent

by Nimrod Broshy

Article

Video

Mar 24

2 mins

7 minutes
