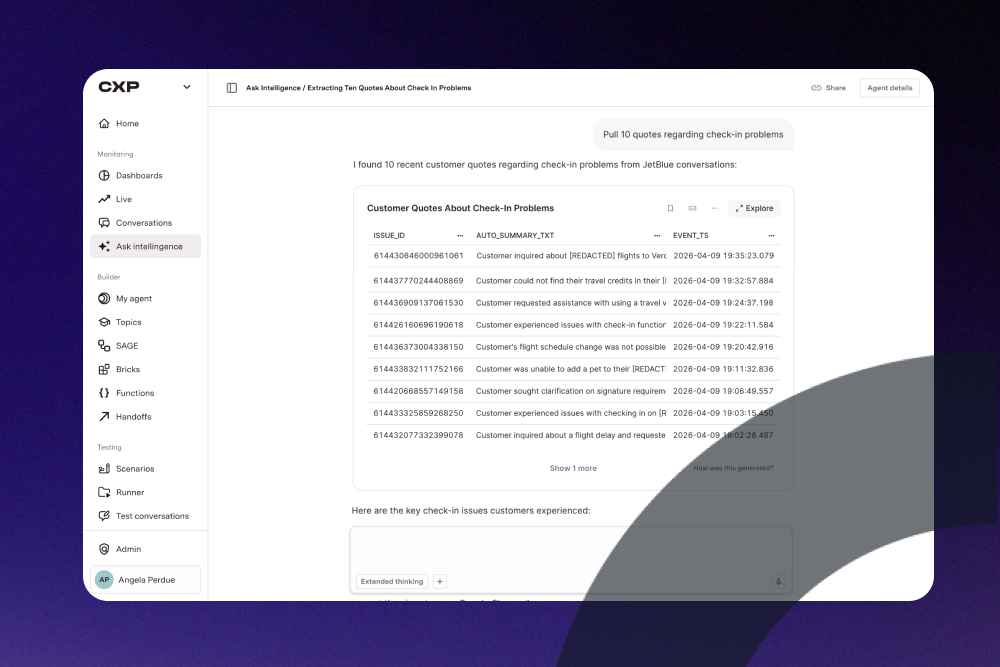

Super excited to be here. Thank you all for being here. Today's conversation is on AI in customer conversations. AI is transforming how companies engage with customers, draw insights, and create new business outcomes. But most companies still haven't used it to its full potential. Today, I'm fortunate to have three people on this stage who have built the infrastructure, who have deployed it at scale at Fortune 500 companies, and who have actually led the transformation as operators. We're going to spend the next twenty-five minutes on one core question: what does it actually take to get real value from AI in customer conversations, and why is it still so hard? My name is Andrew Tan. I'm an AI founder and investor, and I write an AI newsletter called TLDR AI. I'm lucky to be on stage with Priya, Arun, and Dylan, who I'll let introduce themselves.

Cool. You want me to go first? Great. Go ahead.

Hey. Great to be here. My name's Dylan. I'm the founder of a company called AssemblyAI. We create voice AI infrastructure that other companies can build on. We have a million developers on the platform and millions of hours of voice flowing through our API infrastructure every day. That's everything from AI notetakers you might use, like Fireflies and Granola, to voice agents, contact center operations, and consumer toys, all building on our API infrastructure.

Hi, everyone. My name is Arun Chandra, and I'm the chief operating officer of NICE. We provide a full suite of CX AI solutions, from standalone agentic solutions all the way to CCaaS solutions completely embedded with AI platforms, plus the end-to-end orchestration between human agents and virtual agents.

Hi. My name is Priya Vijayarajendran. I'm a technologist, a builder, and the CEO of ASAPP. ASAPP is an agentic enterprise platform purpose-built for customer service. Most of you have probably already interacted with ASAPP through one of our customers.
If you're flying, calling your financial services provider, or trying to get an insurance quote, you're probably interacting with ASAPP. Really excited to be here.

Awesome. So there's no shortage of AI platforms and solutions on the market right now. From a customer's perspective, it isn't about finding an AI. It's about figuring out how to adopt it, how to integrate it, and how to justify the cost in terms of people, time, and now tokens. So what are you seeing from the companies you work with? How are they navigating this, and what does it actually take to connect AI to real business outcomes?

I'm happy to take a stab, if you don't mind. And just to add, before joining NICE, I was at Disney for the last four years, actually implementing these solutions and dealing with the challenges we've just talked about. So I think there are a couple of things going on. In my mind, there are three kinds of customers, or customers in three buckets, if you will. The first bucket is customers who are very keen on piloting, on creating a POC on AI. Either they're self-motivated or their C-suite has told them they have to go do something, so they're doing it on an isolated basis. There's another set of customers who are trying to modernize their current technology stack and infuse more AI into it. That's a very valid use case, because there's so much benefit to be gained by introducing AI capabilities into, for example, the CCaaS platform. And then there are the customers who are probably the most sophisticated, who are thinking about the entire journey and figuring out how they want to string it all together.
My belief is that some of these pilots struggle to succeed because the companies haven't looked at the data requirements, the knowledge requirements, and the change management required to take an AI pilot and scale it across the enterprise, plus all the privacy, legal, and compliance considerations in a large enterprise. If you're a small company, you can get away with a lot of different options. But if you're scaling to a mid- to large-size enterprise, all of these considerations have to be taken into account to ensure that you can scale the pilot to success.

Yeah. From my point of view, even when I look at the agents we're building for our own company, there's such rapid iteration required. A lot of times you'll see some AI agent or feature fail, and it's so hard to know why it failed. Was the knowledge source wrong? Did it hallucinate? Did it not have access to something? What is the exact reason it failed, so that I can go fix it? When we look at AI deployments that go well, what they have is really good telemetry. They're able to iterate really quickly, because you need to be able to drive rapid improvement. A lot of times you'll launch an AI agent or product and so many things will break, so many edge cases. You have to be able to see those and fix them really quickly. And it's so hard to predict up front what those edge cases are going to be. You always find new things once you go live. That visibility and those rapid iteration cycles are what make or break AI deployments, from our point of view.

I mean, adding to what Dylan and Arun said, there are so many options out there. Every enterprise is trying to figure out how to turn its real customer signals and conversations into something value-adding, and also how to improve its operations.
They can build it themselves or buy it from somebody else, but they're also trying to weigh the cost against the ROI. Here, the complexity, as he said, is understanding the context and the data, not creating a black-box effect, and understanding why the system does what it does. At ASAPP, we focus on orchestration quite a bit, because, I mean, I was just walking the floor, and every booth has the word agent. We can all build agents. We can all bring some form of automation. We can all even figure out a way to provide the right data. But the hard part is knowing when to do what and when to call what: when it's simple task disambiguation, when it needs deep reasoning, and when it's just a direct API call. That orchestration, with the right context and the right data, has to be tied to the proper outcome. And the outcome here is making sure you are actually solving the problem for the customer, not just deflecting the call. That's the value, and enterprises get to choose how fast they get to that value and how seamlessly it integrates with their existing stack. That's where we bring the difference.

Arun, was there something you wanted to add to that?

No, I would agree with Priya. The one thing that dictates success, and again, I'm going to take the lens of the medium to large enterprise, not very small organizations or new startups, is this: their P&L is very real. There's nothing artificial about the P&L for an airline or a bank. They're trying to meet their strategic and financial objectives, and there has to be a true line from the objectives they want to drive all the way back to the AI deployment. If I deploy AI, it's one more big, massive tool in my toolkit, but I've got to figure out how it ties back to my objectives. Am I trying to reduce cost? Am I trying to improve experience?
Am I trying to improve ROIC, return on invested capital? When you approach a project from that lens, your chances of success are greater than if you say, hey, I'm going to do a pilot here. Yes, you have to do some experimentation, but very soon that experimentation has to translate into a scalable plan with all the considerations we talked about earlier: knowledge, data, privacy, legal. All of that has to come into play, because it becomes extremely relevant in a large organization.

Yeah. Arun, you touched on pilots. There are a bunch of stats out there about how many AI pilots actually convert from the pilot or POC stage into an enterprise deployment, and it's something like, I don't know, ninety-five percent of pilots fail. Why do you think that happens? Is this different from what we've seen in traditional SaaS? How do you reconcile that?

I'll start with this, because every engagement with a large enterprise starts with a POC or a pilot. They're trying to evaluate what impact it creates. We all know the stats from McKinsey and others. There's an interesting article going around called the last-mile execution crisis. I'd bucket the causes into three categories. The first is companies that bolt on AI. Even in customer conversations, it's not just about understanding and automating the conversation (Dylan can speak to that, especially given his thought leadership on the voice side). It's about redesigning the workflow, bringing AI efficiency to every step of the way so you can get the outcome. Companies that bolt on automation just for the sake of automating the conversation, while the real execution of the workflow is left behind, are the ones who feel like, oh, the POC kind of worked, but I can't take it any further. The second is companies that aren't enabling the workflow redesign.
The third is unavailability of data, because more often than not, the POC is done from a tech-evaluation or LLM-evaluation angle, without being deeply integrated with the business or, like we said, with the security teams and the data teams. From doing a lot of POCs, those are the three reasons we see companies failing to get the full advantage.

Yeah. I can think of a recent example. We built a technical support agent, because we're an API platform and we have forty thousand developers a month signing up for our API to build things. These are developers at enterprise companies and also developers at universities who are just prototyping. There are a lot of configuration questions, or maybe you need code in C#, or whatever. So we built this technical support agent that you can chat with. It can answer questions about our API and about billing, or whether you need a BAA, whatever. It can do a lot. The first iteration had less than a fifteen percent resolution rate, more like ten percent, and everything else would get kicked out to our actual support team to fix. Then we got in there and made a bunch of changes, and now it's up to a seventy percent resolution rate. It's so good that people are having really long conversations with it and writing in, like, wow, that was so helpful, thank you. Now they prefer talking to that agent. They really like it. So when I think about your question, we didn't unlock any secret molecules to drive that change. We just dug into why it was failing here, and here, and here, and then went brick by brick and surgically fixed all those things. I think getting from the v1 to a really good deployment ends up taking more time than people realize. A lot of it is that it's still too easy to fail today.
There's a lot of complexity in getting things to work well. It's getting easier and easier, but it's still a bit too easy to run into these edge cases.

Yeah, I agree. And I would just punctuate the point Priya made. This used to be true when you were deploying regular software or SaaS, and the saying was, please don't automate a bad process. The same thing applies today: please don't put AI on top of a bad workflow. To the extent that these pilots are failing, it may be because they weren't approached with the mindset of, I'm going to transform the way we do work. If you just do a pilot, or drop AI on top of something, that's where you may see more failures, because you haven't thought through the changes that have to be made in people, process, and surrounding technology, or the fragmentation: in any large enterprise, data is highly fragmented. So the way to approach this has to be a slightly different mindset, unless you're solving a point solution in a smaller organization, in which case things will work.

Makes sense. When you go on Twitter and see all these AI demos, everything reads as doable. You plug in a model, you automate some conversations, and you're done. But clearly, the transformation at these bigger enterprises is not done yet. Why is it so hard, and what is the gap between "this is doable" and "this is done"?

So let me take a first crack. The democratization, or commoditization, of productivity that AI has brought to all of us creates an "everything is doable" effect, which is partially true: things that used to take a long time to code have come down to days and minutes. But, adding to what both of my fellow panelists said, a lot goes into assembling the pieces so they come together to create impact on downstream metrics.
If the goal is just automation, you instantly get the doable effect. But you only get the done effect when you're able to impact the real downstream metrics. A simple example: an airline has a hundred million phone calls. I'm able to automate all hundred million of them: Hi, Jack, why are you calling? How can I help you? Now suppose that on some Airbus A350 or whatever, half the entertainment systems didn't work, and ten percent of the customers are calling about it. The fact that you're automating those calls isn't something we should be proud of. Okay, you solved it, and instead of ten dollars a call, you probably solved it for one dollar. But if ten percent of those passengers are calling, you should have the intelligence to say, you know what, the remaining ninety percent shouldn't have to call. Let me be proactive. That's the downstream impact I'm talking about. So a lot more comes into play: connecting the data intelligence and rethinking the workflow. For me, this is not just about automating customer conversations. Rather than a point solution, it's about really reimagining the customer experience. That, for me, is the difference between doable and done. The doable effect is why you see a lot of those I-can-do-it type of prototypes.

Yeah. I think one thing that can help a lot is dogfooding the stuff you're building. It's kind of easy to vibe-code something, ship it, and then ask, why isn't this working? But actually using the thing yourself and really going through the experience of working with it is very illuminating. So I think there's a bit of a lack of dogfooding of the agents and AI capabilities being built within enterprises. If that changes, quickly catching things like, oh, this part doesn't have product-market fit, or this is a bad customer experience, can help drive the success of deployments.

The only thing I would add is that what's done is that everybody has recognized the power of the technology and its possibilities. That part is definitely done. The part that remains, again with the same lens, is: can I scale this to a hundred and fifty geographies? Can I scale it to thirty-seven languages? Can I deal with seven different tax regimes if I'm collecting revenue? Handling that level of complexity to deploy this operationally is the part that remains to be done. And one of the things that requires is not only the technology platform but also a partner who's vested in your business outcomes, who understands what you're trying to solve for, and who works with you all the way to ensure you get the outcome you want: truly global scale, with AI working everywhere for you rather than in pockets.

Yeah. Going off of that, where do you see the gaps in getting us to that level of scale? You mentioned finding a partner who's along for the journey. What about model capabilities, existing infrastructure, harnesses? What would we need to see to get to that level of scale?

Yeah. I think the raw primitives are kind of there right now. It's about how you string this stuff together and iterate really quickly. The crazy thing about all this technology is that every couple of months it gets so much better. What AI coding agents can do today versus even a couple of months ago is so wild, and that's true, I would say, for every AI technology being built. So I do think the raw primitives to deploy this stuff at scale are there. It's just that you can't one-shot it.
You have to have massive, relentless iteration as you get feedback from the end users who are using this stuff.

My intuition is that the technology is good enough. I don't think this is a technology challenge. Given the massive investment going on at the infrastructure and model levels, it will only get better and will definitely be on par with whatever we need. The challenge becomes navigating all the other obstacles to deploying it in a way that creates lasting value for you as an enterprise. And one point we haven't talked about is that there's a certain cost attached to it. As more and more AI companies prepare to go IPO, they're looking at their own financials, so the cost of tokens and the cost of deploying these harnesses are all going to come home to roost. Enterprises will have to sit back and say, okay, I've maybe reduced cost somewhere, but now I've got additional cost to deal with. How do I create visibility around it? How do I manage it? If I buy a million tokens and give them to my team, who's using how many? Did somebody burn through all of them overnight on an experiment? There are all kinds of interesting challenges involved in scaling this, but I think the underlying technology is good and going to get even better.

I agree with this. We're not waiting on any disruption to make it possible. But the true innovators in this space are the ones who can anticipate the innovation that's coming, because speech-to-speech models are becoming real. The things we're optimizing today for latency and scale, to handle multimillion voice conversations, we won't have to solve anymore as speech-to-speech models get better. So the problems we chase will be different.
But the true innovation is in anticipating some of those needs and building the stack in such a modular, stackable way that you don't lose speed in bringing it to your enterprise.

Makes sense. Awesome. I think we're at the end of time, but I'm not sure, so I'm going to squeeze in one more quick question. I'm just curious: how are you guys using AI to make yourselves more productive in unique ways, either at work or even at home?

Truly, you have to be an AI-native company to be able to offer an AI-native platform, and obviously we use it for core productivity. But the platform itself is built on a fundamental, first-order design principle: agents build an agentic platform. That sums up how we do it.

I mean, we've built pretty advanced agents in the company that can look at customer feedback and submit PRs to GitHub based on it.

Likewise.

It's pretty wild. We have an internal claw that we built that has access to telemetry and analytics, and it's really crazy how much that's helping us as a company. The whole company can use it, and they can talk to other agents at the company. That's been a huge unlock for us internally.

Same. And we're using the same product we sell to our customers internally, to improve our own internal operations all day long.

I love it. I love it. Alright. Well, thank you guys so much.