The concept of the human-in-the-loop (HITL) has long been foundational in artificial intelligence. In traditional machine learning systems, humans serve as trainers, validators, and safeguards who ensure models behave as expected.

But as generative AI rapidly evolves from a back-office tool into the front door of customer experience, the meaning of human-in-the-loop is undergoing a profound shift.

Today, AI agents are no longer just assisting human agents. They’re increasingly leading customer interactions end-to-end. This change raises a critical question for CX leaders: What is the right role for humans in an AI-first service model?

The answer is more nuanced than simply inserting a human checkpoint. In the agentic era, a human in the loop should be there to make the AI smarter, ensure governance, and support the AI’s ability to scale automation without compromising the customer experience.

The shifting definitions of human-in-the-loop

The term human-in-the-loop has been interpreted in many ways over the years, often depending on the maturity of the underlying technology.

In early AI deployments, HITL typically meant placing humans directly in the execution path. For example, an AI system might generate a response, but a human agent would be required to review, edit, and approve that response before it was sent to the customer. This approach prioritized safety and accuracy, especially in regulated industries. But it came with significant trade-offs.

While effective as a control mechanism, this model introduced friction at nearly every step of the interaction. Every response became a bottleneck. Handle times increased. Agent workloads remained high. And the efficiency gains promised by automation quickly eroded.

For CX organizations under pressure to scale, this version of HITL is unsustainable.

More importantly, it ignores the current trajectory of AI. Today’s generative systems can safely handle a wide range of interactions on their own. Treating them as tools that require constant human validation limits their effectiveness and prevents organizations from realizing their full value. The most advanced agentic CX platforms are built with layered safety mechanisms, including real-time monitoring, so a human no longer needs to review every response.

The challenge now is how to include humans in the loop so they enhance AI performance, rather than constrain it.

The most common HITL approach in CX: The escalation trap

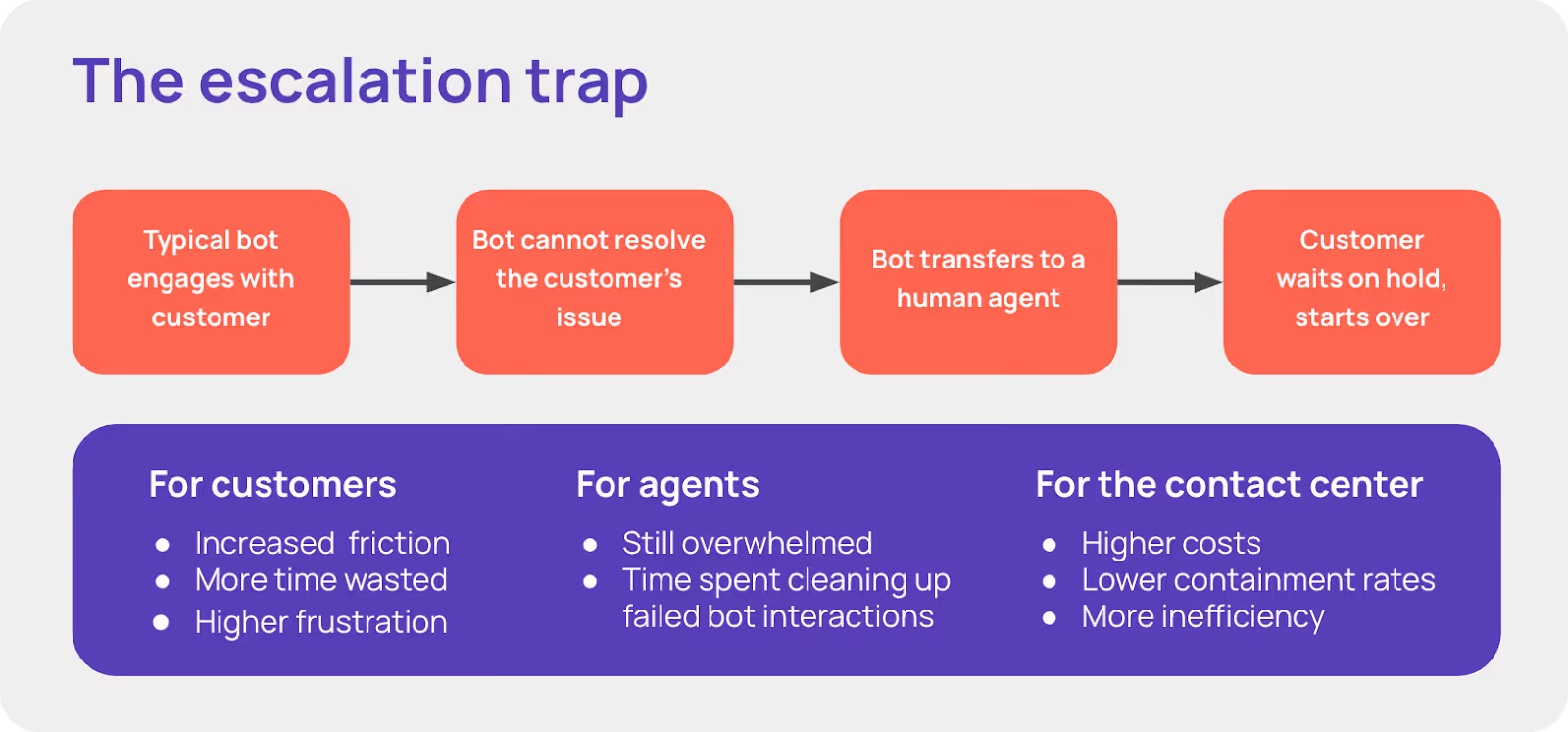

Most AI for CX vendors have moved away from mandatory human review. But they’ve replaced it with an equally problematic model: escalation-based HITL. In this paradigm, the AI agent handles interactions until it encounters something it cannot resolve. When that happens, it escalates the conversation to a human agent, effectively forcing a transfer of the entire interaction.

At first glance, this seems like a reasonable compromise. Let AI handle the easy stuff, and let humans step in for complexity. But in practice, this model creates what can be described as the escalation trap.

When an AI agent stalls or fails, the customer experience deteriorates quickly. Customers are forced to wait on hold, repeat themselves, or start all over with a human agent. These transfers introduce friction, increase resolution times, and typically lead to frustration.

From an operational perspective, the impact is just as significant. Escalations drive up contact center costs, reduce containment rates, and place a heavy burden on human agents, who now spend much of their time cleaning up failed AI interactions.

In other words, escalation-based HITL doesn’t eliminate inefficiency. It creates more. For CX leaders, the result is a ceiling on automation. No matter how advanced the AI becomes, its effectiveness is limited by how often it fails and transfers work back to humans.

To automate at scale, without sacrificing the customer experience, a fundamentally different approach is required.

HILA™: One small step for ASAPP, one giant leap for human-AI collaboration

This is where HILA, ASAPP’s Human-in-the-Loop Agent approach, represents a significant leap forward in how AI and humans work together.

Rather than treating humans as either gatekeepers or escalation points, HILA positions them as collaborators, supporting the AI in real time without interrupting the customer experience.

In this model, the AI remains in control of the interaction. It engages directly with the customer, manages the flow of the conversation, and drives toward resolution. But when it encounters a gap, like missing information, ambiguous policy, or a need for authorization, it doesn’t escalate. Instead, it asks for help.

Real-time collaboration

When ASAPP’s GenerativeAgent® needs assistance, it generates a targeted request for a human expert. This request is precise and contextual, such as asking for the correct policy interpretation or the appropriate next action in a complex scenario.

Crucially, the AI does not hand over the conversation. It simply lets the customer know that it needs a moment to get an answer or complete a task. When it has what it needs, it resumes the conversation.

A designed workflow

The human agent receives this request within their existing workspace, alongside a summarized context and the full conversation transcript. They can quickly assess the situation, provide the necessary guidance or complete the task, and confirm with the AI.

Because the request is focused and limited in scope, the human’s effort is minimal—but highly impactful. Once the AI receives the input, it immediately continues the conversation with the customer, maintaining continuity and momentum.

Because the AI works at machine speed and the human agent has complete context, this exchange happens in seconds, not minutes. For the customer, that means no transfer. No waiting in the queue. No starting over with a human agent.
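For readers who think in code, the flow described above can be sketched in a few lines of Python. This is an illustrative sketch only, not ASAPP's implementation: `HelpRequest`, `Conversation`, `handle_turn`, the `known_answers` lookup, and the `ask_human` callback are all hypothetical names invented for this example. The point it shows is that the AI keeps ownership of the conversation, tells the customer it needs a moment, sends a narrow request with a summary and transcript, and then resumes.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class HelpRequest:
    """A narrow, contextual question for a human expert (hypothetical shape)."""
    question: str
    summary: str
    transcript: List[str]


@dataclass
class Conversation:
    """Running transcript of a single customer interaction."""
    transcript: List[str] = field(default_factory=list)

    def say(self, speaker: str, text: str) -> None:
        self.transcript.append(f"{speaker}: {text}")


def handle_turn(
    convo: Conversation,
    customer_message: str,
    known_answers: Dict[str, str],
    ask_human: Callable[[HelpRequest], str],
) -> str:
    """Answer one customer turn without transferring the conversation."""
    convo.say("customer", customer_message)
    # Stand-in for the AI's own knowledge: a plain dictionary lookup.
    answer = known_answers.get(customer_message)
    if answer is None:
        # Gap detected: keep the customer informed, then consult a human.
        convo.say("ai", "Give me a moment while I check on that.")
        request = HelpRequest(
            question=customer_message,
            summary=f"Customer asked: {customer_message}",
            transcript=list(convo.transcript),
        )
        answer = ask_human(request)
    # The AI, not the human, delivers the answer and keeps the conversation.
    convo.say("ai", answer)
    return answer


def example_expert(request: HelpRequest) -> str:
    """Hypothetical human expert who answers a single targeted request."""
    return "Yes, the late fee can be waived."


example = Conversation()
handle_turn(example, "Can you waive my late fee?", {}, example_expert)
```

Note that the transcript never contains a human speaker. The expert's input reaches the customer only through the AI, which is the difference from an escalation-based handoff.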

The ROI of HILA-enabled human-AI collaboration

By rethinking the role of humans in AI-first service, HILA unlocks a set of benefits that traditional HITL models simply cannot achieve.

Superior customer experience

Because the AI handles the conversation from start to finish, customers experience a smooth interaction. There are no abrupt transfers, no repeated explanations, and no time spent waiting on hold.

Even when human expertise is required, it happens behind the scenes, allowing the conversation to continue without interruption. The result is a faster, more consistent, and more satisfying customer experience.

Massive scale and voice concurrency

In a traditional escalation model, a human agent can handle only one interaction at a time. But with the HILA approach, human agents are no longer tied to a full conversation.

Instead, they respond to targeted requests, so they can support multiple interactions simultaneously.

This dramatically increases agent productivity and enables organizations to scale operations without a proportional increase in headcount. It also opens the door to voice concurrency, where AI can manage multiple live conversations with human support layered in as needed.

Continuous self-improving AI

One of the most powerful aspects of HILA is its ability to turn human expertise into a continuous learning loop.

When human agents provide input, they’re not just resolving a single interaction. They’re teaching the AI how to handle similar scenarios in the future. Over time, this builds a rich repository of tacit knowledge that improves the system’s accuracy, decision-making, and autonomy.
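One simple way to picture this learning loop is a store that remembers each piece of human guidance and reuses it the next time a similar question comes up. The sketch below is a deliberately simplified assumption, not a description of how HILA learns: `GuidanceStore`, `normalize`, and `ask_human` are hypothetical names, and "similar" here means only an exact match after basic text cleanup, where a production system would need far richer matching and review.

```python
from typing import Callable, Dict


def normalize(question: str) -> str:
    """Crude matching key: lowercase, collapse spaces, drop end punctuation."""
    return " ".join(question.lower().split()).rstrip("?!. ")


class GuidanceStore:
    """Remembers human guidance so repeat questions need no new request."""

    def __init__(self) -> None:
        self._answers: Dict[str, str] = {}
        self.human_requests = 0  # how many times a human was actually asked

    def resolve(self, question: str, ask_human: Callable[[str], str]) -> str:
        key = normalize(question)
        if key not in self._answers:
            # First time this scenario appears: ask a human and keep the answer.
            self.human_requests += 1
            self._answers[key] = ask_human(question)
        return self._answers[key]


def example_expert(question: str) -> str:
    """Hypothetical human expert supplying guidance once."""
    return "Changes are free up to 24 hours before departure."


store = GuidanceStore()
first = store.resolve("Can I change my flight?", example_expert)
second = store.resolve("  can I change my   flight ", example_expert)
```

In this toy version, the second question is answered from stored guidance, so `store.human_requests` stays at one even though two customers asked.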

This means human-in-the-loop agents help the AI evolve and improve its own performance over time.

Increased resolution rate and ROI

By resolving complex issues in real time rather than escalating them, HILA significantly improves both containment rates and First Contact Resolution (FCR).

Fewer escalations mean a lower cost-to-serve, more efficient use of human resources, and a stronger return on AI investment.

Speed, scale, and quality when humans augment AI

Generative AI is reshaping the contact center at a fundamental level. But realizing its full potential requires moving beyond outdated notions of human-in-the-loop.

The traditional approaches—whether based on rigid approval workflows or reactive escalations—fail to capture what today’s agentic AI platforms are capable of achieving.

In the agentic era, humans should be more than an escalation point. Their new role is to augment the system with expertise, judgment, and guidance exactly where it’s needed.

ASAPP’s approach with HILA exemplifies this new paradigm. By enabling true real-time collaboration between humans and AI, it allows organizations to combine the speed and scalability of automation with the nuance and intelligence of human decision-making.

For CX leaders, the takeaway is clear: the future of customer service isn’t about choosing between AI and human agents.

It’s about designing systems where both work well together—each amplifying the strengths of the other—to deliver better outcomes for customers, agents, and the business as a whole.