Michael Griffiths

Michael Griffiths is a data scientist at ASAPP. He works to identify opportunities to improve the customer and agent experience. Prior to ASAPP, Michael spent time in advertising, ecommerce, and management consulting.

Generative AI for CX

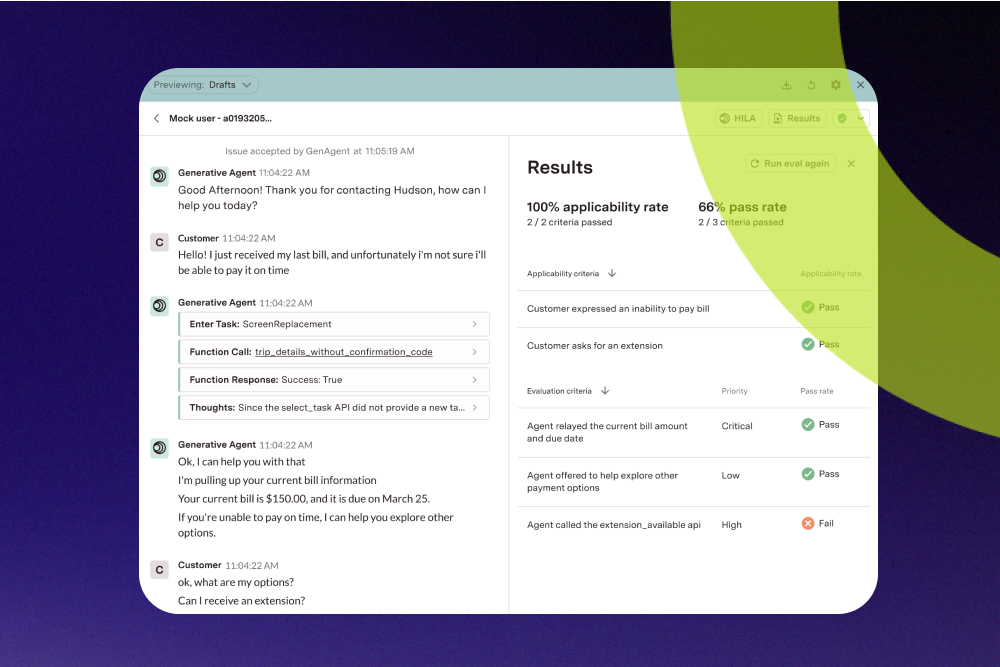

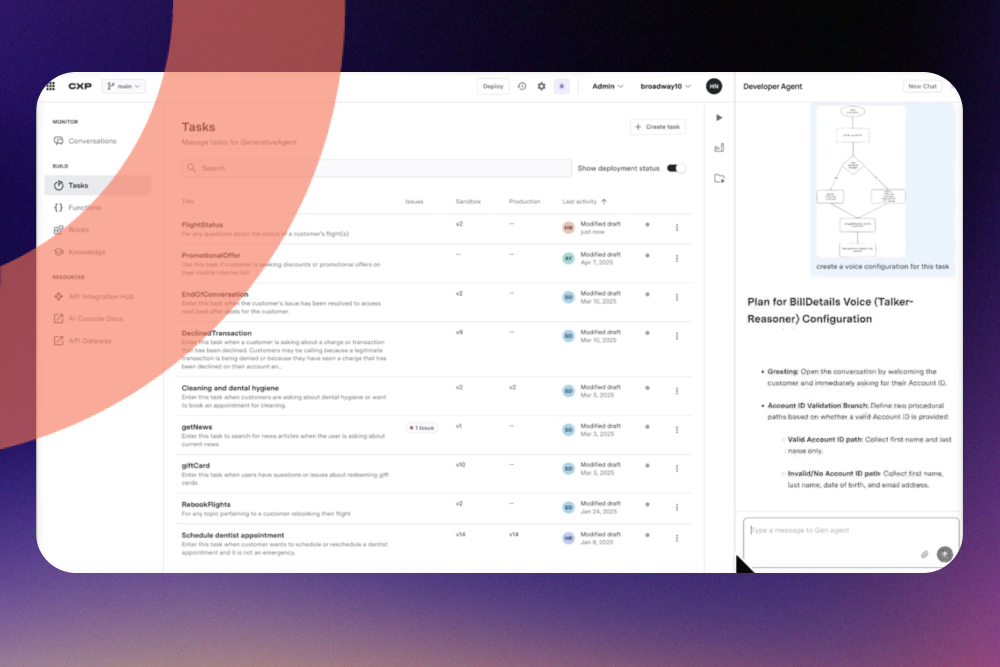

Agentic Enterprise

Getting through the last mile of generative AI agent development

by

Michael Griffiths

Article

Video

Oct 22

2 mins

7 minutes

CX & Contact Center Insights

Customer Experience

Measuring Success

Articles

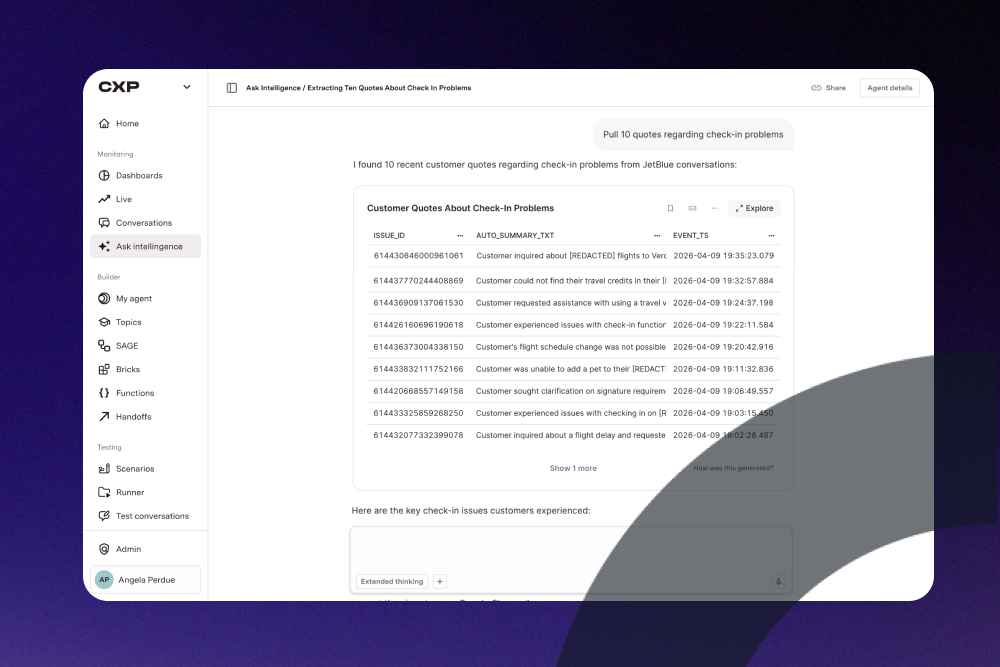

Generating New Customer Intelligence

by

Michael Griffiths

Article

Video

Apr 20

2 mins

4 min

R&D Innovations

Generative AI for CX

How to Understand Different Levels of AI Systems

by

Michael Griffiths

Article

Video

Mar 11

2 mins

AI Native®

Automation

R&D Innovations

Articles

How do you find automation workflows for your contact center?

by

Michael Griffiths

Article

Video

Sep 17

2 mins

Customer Experience

Digital Engagement

Machine Learning

Articles

The chatbot backlash

by

Michael Griffiths

Article

Video

Oct 14

2 mins
