Key things to know:

- Most AI agent architectures ask the language model to handle both conversation and business logic, a design that works in demos but breaks in production.

- ASAPP's Optimization Agent uses State-Based Flows to separate those responsibilities: the language model manages conversation, and the system controls workflow execution—required steps cannot be skipped, validation rules are always enforced, and procedures run correctly every time.

- But reliable architecture is only half the equation. The other half is learning from experience: your AI should get better at handling scenarios it's struggled with before.

- ASAPP's Optimization Agent enables your GenerativeAgent to learn from its own experience. Working with Simulation Agent and Insights Agent, it identifies where conversations go wrong, makes targeted improvements, and verifies they work, so your AI doesn't make the same mistake twice.

- The result: AI automation that doesn't just execute reliably on day one, but gets measurably better over time.

- Optimization Agent is one of five new agents in the ASAPP CXP—alongside Discovery, Developer, Simulation, and Insights—each serving a different layer of your CX operation, tied together by Orchestration at the core.

Every enterprise deploying AI to automate customer service faces two uncomfortable questions: How do you trust an AI to follow your company's policies every time? And once you trust it, how does it learn from what goes wrong?

The honest answer, for most architectures today, is: you can't do either. Not reliably. Not at scale.

Large language models are extraordinary at understanding natural language, extracting meaning from messy human conversation, and generating fluent responses. But they are probabilistic systems. Ask a language model to follow a 47-step refund policy while simultaneously managing authentication, API calls, data validation, and natural conversation, and it will get it right most of the time. But "most of the time" is not what regulated industries need. "Most of the time" is not what your compliance team signs off on.

And even when it works, how does it learn? In a typical monolithic prompt, every instruction interacts with every other instruction. When a conversation goes wrong, there's no way to trace the failure to a specific part of the system, fix it, and know you haven't broken something else. Your best engineers spend hours reading conversation transcripts, adjusting sentences in a prompt, hoping the change helps more than it hurts. The AI makes the same mistakes again and again, because nothing in the architecture lets it learn from experience.

The challenge with putting the language model in charge

The prevailing approach to building AI agents is straightforward: give the model a large prompt containing all the business logic, hand it a set of API tools, and let it figure out the right sequence of actions. This works well for demos and simple use cases. At production scale, it breaks down in specific, predictable ways.

The model skips steps. A policy says, "Always verify account ownership before processing a refund." Suppose the model does this 95% of the time. The other 5%, it proceeds directly to the refund. These failures are intermittent and nearly impossible to reproduce in testing. They emerge only at scale, in production, with real customers.

Changing one instruction breaks something else. In a monolithic prompt, every instruction coexists in a single context. Rewording a refund policy can subtly change how the model handles authentication, not because the topics are related, but because the model's interpretation of the combined prompt shifts. Every change risks unintended side effects.

And you can't prove it works before you deploy it. The conversation logic exists only as prose in a prompt. It can't be visualized as a workflow, inspected by compliance, or statically analyzed for errors. You find out it's broken when a customer encounters the bug.

Worst of all: you can't systematically optimize what you can't isolate. If your entire business logic lives in one prompt, there's no way to identify which part is underperforming, change it independently, and measure the result. Optimization requires structure. Monolithic prompts don't have it.

Some platforms have responded by adding "workflow" features, typically visual builders that sequence prompt templates. These help organize the problem, but they don't fundamentally change who is doing the heavy lifting. The language model is still constructing API calls, still bearing the cognitive burden of sequencing business logic. The workflow layer coordinates which prompt the model receives. It doesn't remove execution responsibility from the model. And it doesn't give you the levers you need to optimize.

These aren't hypothetical risks. They are the daily reality of operating AI automation at enterprise scale.

What's actually changing

As enterprises scale AI-driven operations, procedures become more complex, compliance requirements increase, and the cost of mistakes grows. The right response isn't to prompt-engineer your way to reliability. It's to rethink which parts of the system should be doing what, and then to build the machinery that lets the system improve itself.

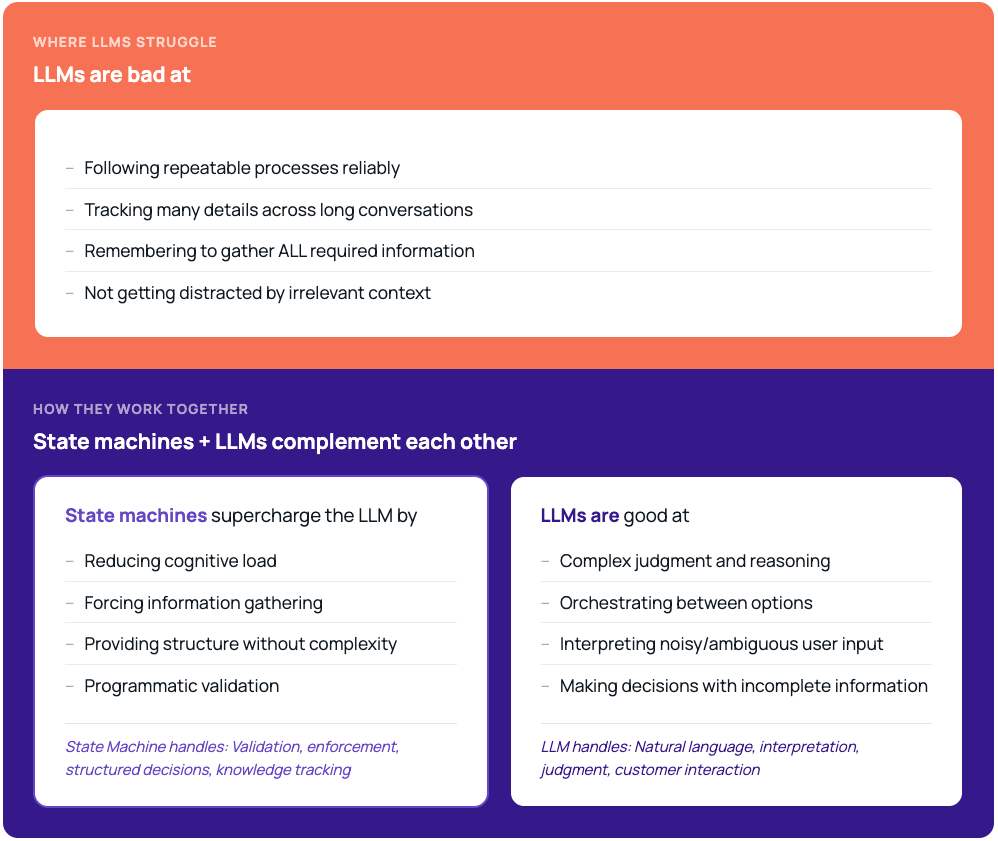

Language models are good at conversation. They understand ambiguous requests, handle topic changes, respond with empathy, and adapt to the customer's tone. They are not, by design, good at guaranteed sequential execution, deterministic API management, or enforcing multi-step business logic without deviation.

A more durable architecture recognizes this: let each component do what it does best. The language model talks. The system enforces. And when something goes wrong, the system learns from it—so the same mistake doesn't happen again.

Why existing approaches fall short

The gap isn't awareness. Most CX leaders and technology teams understand that LLM-based agents aren't perfectly consistent. The gap is architectural, both for execution and for improvement.

When business logic lives inside a prompt, it can't be enforced structurally. You can add more instructions, more guardrails, more examples. But you can't prevent a probabilistic system from probabilistically skipping a step, and you can't guarantee that your validation logic will run in every conversation.

And when something does go wrong, you're left searching through a 300-line prompt for the sentence that caused the problem. Did the model skip the verification step because of how you worded the refund instructions two paragraphs earlier? You'll never know. There's no isolation between concerns.

This lack of structure doesn't just hurt reliability. It makes optimization nearly impossible. You can't A/B test a single step in a monolithic prompt. You can't measure whether your authentication flow improved without also measuring whether your refund flow regressed. You can't run an optimization loop against something that has no independent moving parts.

The teams responsible for compliance need proof that a workflow executed correctly. The teams responsible for performance need the ability to improve specific behaviors without risking everything else. Most architectures today can't provide either.

Introducing Optimization Agent

Last November, we introduced the ASAPP Customer Experience Platform (CXP), designed to orchestrate the best path to resolution across every interaction. This spring, we're introducing its next evolution: a coordinated, multi-agent system with five new agents—Discovery Agent, Developer Agent, Simulation Agent, Optimization Agent, and Insights Agent—each serving a different layer of your CX operation, tied together by Orchestration at the core.

Optimization Agent sits at a critical point in that system. Reliable architecture is the starting line, not the finish line. The real question is whether your AI learns from the conversations that don't go well.

State-Based Flows: The architecture that makes optimization possible

The foundation of Optimization Agent is State-Based Flows: an execution control architecture that separates conversation from business logic, enforcing workflow rules, validating required steps, and making sure procedures execute correctly every time.

The core design principle is straightforward. Workflows are structured as graphs of discrete states. At each state, the language model does what it's good at: understanding the customer and collecting the required information through natural conversation. Once the model submits that data, the system takes over — running validation, calling APIs, evaluating conditions, and advancing to the next state. The model never constructs an API call. It never decides the sequence of operations. It can't skip a step, because the system controls the sequence.
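The following minimal Python sketch makes this division of labor concrete. It models a state graph in which the model's only move is to submit the fields it has collected. Everything in it (`State`, `FlowEngine`, `submit`, the toy PIN check) is hypothetical and illustrative. It is not ASAPP's configuration format or API. The point is structural: the engine, not the model, decides whether a step is complete and which state comes next.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class State:
    """One node in a hypothetical workflow graph."""
    name: str
    instructions: str            # the only guidance the model sees while in this state
    required_fields: list[str]   # data the model must collect before the state can finish
    # System-side hooks; the model never calls these directly.
    validate: Callable[[dict], Optional[str]] = lambda fields: None  # error text, or None if valid
    action: Callable[[dict], dict] = lambda data: {}                 # e.g. API calls; returns new data
    next_state: Callable[[dict], Optional[str]] = lambda data: None  # None means the workflow ends


class FlowEngine:
    def __init__(self, states: list[State], start: str):
        self.states = {s.name: s for s in states}
        self.current: Optional[str] = start
        self.data: dict = {}

    def submit(self, fields: dict) -> str:
        """The model's only tool: hand over collected fields, get back a curated message."""
        if self.current is None:
            return "This workflow is already complete."
        state = self.states[self.current]
        missing = [f for f in state.required_fields if f not in fields]
        if missing:
            return "Still needed: " + ", ".join(missing)
        error = state.validate(fields)
        if error:
            return error  # the engine stays in the same state
        self.data.update(fields)
        self.data.update(state.action(self.data))  # raw API results stay in engine-owned data
        self.current = state.next_state(self.data)
        return "Step complete." if self.current else "Workflow complete."


# Usage: a two-state flow where verification must happen before a refund.
flow = FlowEngine(
    [
        State("verify", "Ask the customer for their account PIN.", ["pin"],
              validate=lambda f: None if f["pin"] == "1234" else "That PIN did not match.",
              next_state=lambda d: "refund"),
        State("refund", "Confirm the refund amount with the customer.", ["amount"],
              action=lambda d: {"refund_id": "R-001"}),
    ],
    start="verify",
)
print(flow.submit({"amount": 50}))  # → Still needed: pin
```

`submit` checks the current state's required fields before it does anything else. A premature refund attempt therefore just gets a "Still needed" message back, and the refund state stays unreachable. Enforcing that order does not depend on how any prompt is worded.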

What this means in practice:

- Required steps are never skipped. Policy enforcement is structural, not instructional. The system will not advance past a verification step until the required data is collected and validated, every time, for every customer.

- API integrations are protected. All API interactions are encapsulated inside typed, sandboxed functions that the system executes automatically. The language model learns about outcomes through curated messages, not raw API responses.

- Changes don't create unintended side effects. Each state is self-contained with its own instructions, data requirements, and validation logic. Editing one step has zero effect on other steps, because their instructions never coexist in the same context.

- Workflows are validated before deployment. Configurations are compiled through a multi-stage pipeline that proves data flows correctly along every path a conversation might take through the workflow graph. A misconfigured workflow cannot be deployed. Structural errors surface at build time, not in production.
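The post doesn't describe how the compilation pipeline works. One standard way to check that data flows correctly along every path is a must-availability dataflow analysis. Compilers use the same idea for definite assignment: a field counts as available on entry to a state only if every path reaching that state produces it. The sketch below assumes a hypothetical `StateSpec` that declares which fields each state consumes and produces. It illustrates the technique and is not ASAPP's compiler.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StateSpec:
    consumes: frozenset[str]      # fields that must exist when the state runs
    produces: frozenset[str]      # fields the state adds (collected or returned by APIs)
    successors: tuple[str, ...]   # states that may follow this one


def check_dataflow(states: dict[str, StateSpec], start: str) -> list[str]:
    """Report every state whose inputs are not guaranteed on *all* paths from start."""
    errors = [f"{name}: unknown successor {succ!r}"
              for name, spec in states.items() for succ in spec.successors if succ not in states]
    if errors or start not in states:
        return errors or [f"unknown start state {start!r}"]
    all_fields = frozenset().union(*(s.produces for s in states.values()))
    # available[s]: fields guaranteed on entry to s. Begin with "everything" and
    # narrow by intersection until nothing changes (a must-analysis).
    available = {name: all_fields for name in states}
    available[start] = frozenset()
    changed = True
    while changed:
        changed = False
        for name, spec in states.items():
            out = available[name] | spec.produces
            for succ in spec.successors:
                narrowed = available[succ] & out
                if narrowed != available[succ]:
                    available[succ] = narrowed
                    changed = True
    return [f"{name}: may run without {sorted(spec.consumes - available[name])}"
            for name, spec in states.items() if spec.consumes - available[name]]


fs = frozenset
ktn_flow = {
    "collect": StateSpec(fs(), fs({"name", "confirmation_code", "ktn"}), ("lookup", "update")),
    "lookup":  StateSpec(fs({"confirmation_code"}), fs({"passenger_id"}), ("update",)),
    "update":  StateSpec(fs({"passenger_id", "ktn"}), fs(), ()),
}
print(check_dataflow(ktn_flow, "collect"))
# → ["update: may run without ['passenger_id']"]
```

The shortcut from `collect` straight to `update` is flagged at build time because that path never looks up a `passenger_id`. Unreachable states keep the initial "everything available" set and are not flagged. A fuller checker would probably report them separately.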

But here's what makes State-Based Flows truly different from other workflow architectures: they create the preconditions for learning from experience. Because each state is isolated, you can measure its performance independently. Because transitions are deterministic, you can trace exactly where a conversation went wrong. Because configurations are structured data (not prose), improvements can be made to specific steps without risking the rest of the workflow. The architecture doesn't just make your AI reliable; it makes your AI capable of getting better.

Auto-Optimization: GenerativeAgent learns from experience

Today, when GenerativeAgent deviates from expected behavior (misunderstanding a customer request, collecting the wrong data, or failing to resolve an issue), the failure is flagged, likely reviewed by an engineer, and hopefully fixed manually. The AI has no mechanism to learn from its own mistakes. It will make the same error the next time it encounters a similar scenario.

Optimization Agent changes this. It works together with two other agents in the CXP to close the learning loop:

- Simulation Agent generates realistic conversation scenarios that test your GenerativeAgent across the full range of customer intents, edge cases, and difficult situations, including the ones that have caused problems before.

- Insights Agent analyzes the results, identifying exactly where conversations succeed and where they break down, not just "this conversation failed," but "this specific step in this specific workflow is where the failure occurred, and here's why."

- Optimization Agent takes those insights and makes targeted improvements to the specific workflow steps that need attention, then verifies the changes actually work by running the same scenarios again.

The result is a learning cycle: your GenerativeAgent encounters a difficult scenario, the system identifies what went wrong and where, improvements are made to the specific steps involved, and the fix is verified. The next time a similar scenario comes up, the AI handles it correctly. It learned from experience.

Because State-Based Flows decompose your workflow into independent, measurable states, this learning is precise. Optimization Agent doesn't make sweeping changes and hope for the best; it identifies exactly which step underperformed and improves just that step, without risking the rest of the workflow. This is impossible with monolithic prompts, where every change affects everything.
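The loop described above (simulate, attribute failures to a state, revise that state, re-run) can be sketched in a few lines of Python. This is a toy built on stated assumptions. `toy_run` stands in for Simulation Agent, `failures_by_state` for Insights Agent, and `optimize_once` with its `propose` callback for Optimization Agent. All of these names, and the `Config` shape, are hypothetical, and a real system would use far richer scenarios and scoring. The sketch does show why per-state isolation matters. The candidate change touches one key in the config, and it is kept only if re-running the same scenarios shows fewer failures at that state and no new failures elsewhere.

```python
from collections import Counter
from typing import Callable, Optional

Config = dict[str, str]                           # state name -> that state's instructions
Runner = Callable[[Config, dict], Optional[str]]  # returns the failing state, or None on success


def failures_by_state(config: Config, scenarios: list[dict], run: Runner) -> Counter:
    """Attribute every failed simulated conversation to the state where it broke."""
    return Counter(state for state in (run(config, sc) for sc in scenarios) if state is not None)


def optimize_once(config: Config, scenarios: list[dict], run: Runner,
                  propose: Callable[[str, str], str]) -> tuple[Config, Counter]:
    """Rewrite only the worst state, then keep the change only if re-running shows it helps."""
    before = failures_by_state(config, scenarios, run)
    if not before:
        return config, before
    worst, _ = before.most_common(1)[0]
    candidate = {**config, worst: propose(worst, config[worst])}  # every other state is untouched
    after = failures_by_state(candidate, scenarios, run)
    improved = after[worst] < before[worst]
    no_regressions = all(after[s] <= before[s] for s in config if s != worst)
    return (candidate, after) if improved and no_regressions else (config, before)


def toy_run(config: Config, scenario: dict) -> Optional[str]:
    # Toy simulator: a nickname fails to match only if the name step
    # doesn't ask for the spelling on the boarding pass.
    if scenario["uses_nickname"] and "boarding pass" not in config["collect_name"]:
        return "collect_name"
    return None


config = {"greet": "Greet the customer.",
          "collect_name": "Ask for the traveler's name.",
          "update_ktn": "Confirm the new KTN."}
scenarios = [{"uses_nickname": i % 12 == 0} for i in range(100)]
new_config, remaining = optimize_once(
    config, scenarios, toy_run,
    propose=lambda state, text: text + " Ask for it exactly as it appears on the boarding pass.")
print(remaining)  # → Counter()
```

If `propose` returns a change that doesn't reduce failures at the targeted state, `optimize_once` hands back the original config unchanged. That is the "verify before keeping" step in miniature.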

Think of it this way: State-Based Flows give you the control surfaces. Optimization Agent, Simulation Agent, and Insights Agent work together to turn the dials.

How it works

In practice, consider a customer calling to update a Known Traveler Number (KTN) on their reservation. Here's what happens with State-Based Flows and Optimization Agent:

The language model greets the customer and understands the request. It's given a focused set of instructions and a small number of fields to collect: name, confirmation code, traveler number. It gathers these through natural conversation, asking follow-up questions, handling corrections, and being patient.

Once the model submits the collected data, the system runs automatically: it validates the traveler number format, calls the reservation API, matches the customer to the correct passenger, calls the update API, and records the result. The model never sees these API endpoints. It never decides the order of operations. It receives a curated summary ("KTN updated successfully for this passenger") and communicates the result naturally to the customer.
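The system-side half of this example can be sketched as one deterministic function that runs after the model submits its three fields. Several pieces are assumptions made for illustration: the reservation data, `fetch_reservation`, `update_ktn`, the audit log, and the nine-character KTN pattern. The post does not specify the airline's APIs or the actual validation rule.

```python
import re

# Hypothetical stand-ins for the airline's reservation APIs (not a real interface).
RESERVATIONS = {"ABC123": [{"id": "p1", "name": "JANE Q DOE"}, {"id": "p2", "name": "JOHN DOE"}]}
AUDIT_LOG: list[dict] = []


def fetch_reservation(code: str):
    return RESERVATIONS.get(code)


def update_ktn(code: str, passenger_id: str, ktn: str) -> dict:
    return {"status": "ok", "passenger_id": passenger_id}


KTN_PATTERN = re.compile(r"[A-Z0-9]{9}")  # assumed format, for illustration only


def run_ktn_update(collected: dict) -> str:
    """System-side steps after the model submits name, confirmation code and KTN.
    Only a curated sentence is returned to the model; API payloads stay here."""
    ktn = collected["ktn"].strip().upper()
    code = collected["confirmation_code"].strip().upper()
    if not KTN_PATTERN.fullmatch(ktn):
        return "The traveler number isn't in a valid format; ask the customer to re-check it."
    passengers = fetch_reservation(code)
    if passengers is None:
        return "No reservation matches that confirmation code."
    wanted = " ".join(collected["name"].upper().split())  # normalize case and spacing
    match = next((p for p in passengers if p["name"] == wanted), None)
    if match is None:
        return "The name doesn't match any passenger on this reservation."
    result = update_ktn(code, match["id"], ktn)
    AUDIT_LOG.append({"code": code, "passenger_id": match["id"], "status": result["status"]})
    if result["status"] != "ok":
        return "The update couldn't be completed right now."
    return "KTN updated successfully for this passenger."


print(run_ktn_update({"name": "Jane Q Doe", "confirmation_code": "abc123", "ktn": "tt1234567"}))
# → KTN updated successfully for this passenger.
```

Submitting "Jane Doe" instead of "Jane Q Doe" returns the mismatch message. That is the kind of passenger-matching failure discussed below.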

The model handled the conversational parts. The system handled the execution. The customer received a reliable, consistent resolution.

Now, imagine that 8% of KTN conversations fail at the passenger-matching step—the customer provides a name that doesn't quite match the reservation record. Simulation Agent replays these scenarios. Insights Agent pinpoints the failure: the name-collection step isn't asking for the name in the right format. Optimization Agent updates just the name-collection state to ask for the name exactly as it appears on the boarding pass, then re-runs the same scenarios to verify. Match rate improves. No other step in the workflow was touched or affected.

The GenerativeAgent learned from those failed conversations. The next customer who calls with a similar name formatting issue gets a better experience because the system already encountered this problem and fixed it.

Multiple workflows can run simultaneously. If a customer pivots mid-interaction, asking about baggage policy while a reservation update is in progress, the model manages both conversations contextually while the system independently tracks the state of each workflow. When one completes, the other is right where the customer left it.
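One simple way to model this is to keep each workflow's position in system-owned state and treat the most recently started workflow as the one in focus. The `Workflow` and `Session` classes below are hypothetical. The stack is an assumption made for brevity, since the post doesn't say how ASAPP tracks concurrent workflows.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Workflow:
    name: str
    steps: list[str]
    position: int = 0  # tracked by the system, not inferred by the model

    @property
    def current_step(self) -> Optional[str]:
        return self.steps[self.position] if self.position < len(self.steps) else None


@dataclass
class Session:
    """Keeps each workflow's progress separately; the most recently started one is in focus."""
    stack: list[Workflow] = field(default_factory=list)

    def start(self, workflow: Workflow) -> None:
        self.stack.append(workflow)

    @property
    def in_focus(self) -> Optional[Workflow]:
        return self.stack[-1] if self.stack else None

    def advance(self) -> None:
        workflow = self.in_focus
        if workflow is None:
            return
        workflow.position += 1
        if workflow.current_step is None:  # finished: the interrupted workflow regains focus
            self.stack.pop()


session = Session()
session.start(Workflow("ktn_update", ["collect_details", "confirm_passenger", "apply_update"]))
session.advance()                                               # details collected
session.start(Workflow("baggage_policy", ["answer_question"]))  # customer pivots mid-flow
session.advance()                                               # question answered, baggage flow ends
print(session.in_focus.name, session.in_focus.current_step)
# → ktn_update confirm_passenger
```

A stack covers the interrupt-and-return pattern in this example. If a customer jumped back to an older workflow that isn't on top, the session would need a lookup by name instead.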

From probabilistic to provable, from static to continuously improving

For CX leaders, Optimization Agent changes the conversation:

For operations teams managing complex service procedures, this means an AI that gets better at the scenarios that matter most: the difficult edge cases, the unusual requests, the situations that used to require escalation to a human agent.

For technology leaders accountable for governance, it means an audit trail grounded in structure. Every conversational moment is tied to a specific point in the workflow graph. Every improvement is a targeted, verifiable change to a specific state. When something goes wrong, you see which state failed and why, and you can see that the system has already learned from similar failures in the past.

Where this matters most

Regulated service environments. When a required verification step has compliance implications, you need structural enforcement, not probabilistic confidence. Optimization Agent’s State-Based Flows encode compliance requirements as workflow structure, not as prompt instructions the model might follow. And Optimization Agent ensures those workflows improve within the compliance boundaries you define.

High-complexity procedures. As workflows grow to cover more intents and more API integrations, monolithic architectures degrade. With Optimization Agent’s State-Based Flows, the language model always sees the same small set of tools, regardless of how many workflows or APIs are configured in the system. Complexity lives in the engine, not in the model's context. And Optimization Agent manages that complexity automatically, identifying and improving the specific states that need attention, no matter how large the workflow graph grows.

Teams scaling automation without scaling risk. The enterprises we work with, including American Airlines, DISH, and Astound Broadband, aren't just trying to automate more conversations. They're trying to maintain control as automation expands, and ensure their AI learns and improves as it encounters new situations. Optimization Agent is designed for exactly that: reliable execution that gets better from experience, at enterprise scale.

What this means for how you think about CX automation

The conversation in enterprise CX has shifted from "Can AI handle this?" to "Can AI handle this reliably, learn from what goes wrong, and get better over time?"

That's a harder question. And it requires a different architectural answer.

State-Based Flows make your system trustworthy by removing execution responsibility from a probabilistic component and placing it with a deterministic one. Optimization Agent, working with Simulation and Insights Agents, makes GenerativeAgent capable of learning from its own experience: identifying what went wrong, fixing the specific steps involved, and verifying the improvement.

The model handles conversation. The system handles execution. And when something doesn't go right, the system learns from it so it doesn't happen again.

Your team deserves AI automation that doesn't just perform, but learns. From every conversation.

Explore how Optimization Agent can help your team build AI automation that learns from experience. Talk to an AI CX specialist →