Adopting new AI tools without redesigning how work gets done doesn't transform your service model. It just makes the same problems compound faster and grow harder to unwind.

Adding AI to an existing customer service operations workflow is like installing a faster engine in the same car. The rattles are still there. The steering still pulls left. You just find out sooner.

The difference between adopting AI and redesigning work around it matters more than most leaders realize. Right now, customer service teams across industries are rushing to implement AI: new copilots, automation layers, intelligent routing, and generative response apps. The investment is real, the vendor promises are compelling, and the pressure to modernize is legitimate. But for too many organizations, the result is the same: metrics that look better on a dashboard while the structural problems that matter to customers go unsolved.

The issue is not the technology. The issue is that technology alone does not transform how organizations operate. McKinsey's State of AI 2025 puts a number on it: AI high performers are 2.8x more likely to have fundamentally redesigned workflows than their peers (55% versus 20%). The differentiator is not the tools. It is the willingness to redesign the work around them.

The consequences are significant. Without work redesign, organizations hit the ceiling of their AI investment quickly. Handle time improves and containment rates tick up, but those gains plateau while the technology and labor costs keep compounding.

At the same time, the underlying problems do not just persist. They accelerate. More interactions processed faster through the same broken workflows produce more inconsistent outcomes at a greater scale. The tools get faster. The problems get bigger. And the ROI case that justified the investment quietly erodes.

AI tools don’t transform organizations. Design decisions do.

Look closely at most AI deployments in customer service operations, and you will find a familiar pattern: automation layered onto existing processes, legacy workflows left largely unchanged, and performance improvements that live at the edges without touching the structure beneath.

A contact center adds an AI assistant for customer service agents. Handle time improves. But the escalation logic is the same. The quality assurance process is the same. The way supervisors coach, and what they coach for, is the same. The organization has new technology, a larger technology budget, and the same operating model. Customers experience the same friction, which erodes satisfaction and trust. The contact center absorbs the cost, and it stays firmly perceived as a “cost center” rather than a “growth driver.”

This is not a failure of the tools. It is a failure of scope.

The organizations that see lasting change are not the ones that buy the best AI for customer service. They are the ones that use AI as a forcing function to ask harder questions:

- Who owns which decisions?

- How should work actually flow?

- What does accountability look like when a machine is handling the first three steps of every interaction?

Those are design questions. And they require design answers.

Real change starts with work design.

Operating models do not change when new customer service solutions are deployed. They change when responsibilities change.

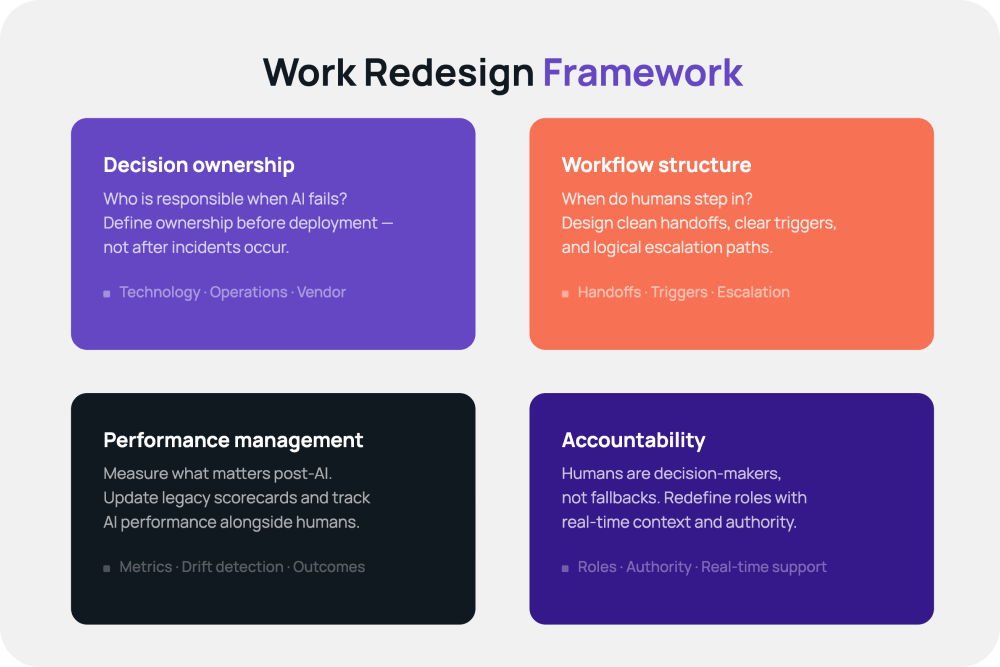

That means going further than procuring and implementing AI in customer service. Sustainable transformation requires deliberate redesign across four dimensions:

Decision ownership.

When AI answers customer queries or handles a task, who is responsible for the outcome? If an automated system misroutes a customer, who owns that failure: the technology team, the operations team, or the vendor? These questions need answers before deployment, not after incidents.

Workflow structure.

Generative AI can handle a remarkable range of customer queries and interactions, but that range is exactly what raises the stakes for how work is designed around it. When a system can respond to almost anything, the critical questions become human ones:

- When do human agents step in?

- Who owns the customer relationship when the AI is uncertain?

- What happens to trust when the system is confident but wrong?

Designing clean handoffs, clear triggers, and logical escalation paths is foundational work that has to happen in parallel with, or before, any technology investment.

Performance management.

What you measure shapes what your teams optimize for. When AI changes what agents do, shifting them toward higher-complexity interactions, oversight responsibilities, or exception handling, the metrics used to evaluate them have to change too. Organizations that keep legacy scorecards in place after deploying AI in customer service end up measuring the wrong things.

But performance management doesn't stop with the humans left in the loop. The AI itself is a performer, and it needs to be managed as one. That means tracking where it succeeds, where it fails, and where it produces confident-sounding answers that turn out to be wrong.

Without a discipline for monitoring AI performance over time, organizations lose the ability to distinguish between "the process is broken" and "the model has drifted." Both are real risks. Each requires a different response. Neither gets caught without measurement.

Accountability.

As automation takes on more of the customer interaction, the humans remaining in the loop carry higher stakes. Their roles need to be defined with that in mind: not as fallbacks, but as decision-makers in a redesigned system. That redefined role demands new support. An agent handling only escalations and exceptions needs real-time AI assistance, full interaction context, and guidance at the moment of decision, not more dashboards to check after the fact.

None of this is as straightforward as deploying software. But it is the work that actually changes outcomes.

The risk of partial transformation with customer service AI

Organizations that implement customer service AI tools without redesigning work pay a price for it. The costs are real: wasted investment, compounding operational debt, declining customer satisfaction, and a widening gap between what customer service AI could deliver and what the organization actually captures.

The symptoms are recognizable. Customer outcomes become inconsistent: AI handles some scenarios well, humans handle others differently, and there is no coherent logic governing which is which. Processes fragment as new AI tools are integrated without updating the workflows around them. Accountability becomes unclear as customer experience teams debate whether a problem is a technology issue or an operations issue.

Partial transformation is often harder to manage than no transformation at all. It combines the disruption of change with the drag of legacy infrastructure, and it tends to surface problems in front of customers before it surfaces them inside the organization.

The best time to catch these issues is before deployment, not during it.

AI customer service implementation: What redesigning work actually looks like

Practical work redesign is not abstract. It is a set of specific, sequenced decisions:

- Define decision points. Map every place in a customer interaction where a choice is made, by a human or a system. Make those points explicit. For each one, determine who or what should own it, and under what conditions it escalates.

- Standardize workflows. AI works best in structured environments. Before deploying AI in customer service, document and standardize the workflows it will operate in. Identify where variation is legitimate and where it is just inconsistency.

- Establish governance. Decide how AI performance will be monitored, who has authority to modify system behavior, and how changes will be communicated to the teams working alongside the technology.

- Measure outcomes. Define what success looks like, not just for efficiency metrics, but for customer outcomes and employee experience. Build the measurement infrastructure before launch so you have a baseline and a mechanism to course-correct.

This kind of structured design work is less exciting than the technology itself. It does not make for compelling demos. But it is what separates organizations that transform from organizations that modernize without changing.

Transforming customer experience is a design discipline

If you are reading this, you’re probably not at the starting line. You might have already made the investment. AI is already in the workflow. The question is no longer whether to adopt; it is whether the work around it has been designed to make that investment pay off.

If it hasn’t, the trajectory is predictable. Early gains plateau as structural problems reassert themselves. Costs compound. And the organization is left holding an expensive technology investment that moved the metrics but didn’t move the business.

The organizations closing that gap are not buying better tools. They are doing harder work: defining who owns decisions, standardizing how work flows, managing AI as a performer, and equipping the humans left in the loop to act with the full context and authority the role now demands.

That is not a technology project. It is a design discipline. And it is the only path from AI adoption to AI transformation.

The question is not whether your organization has adopted AI. It is whether the work has been redesigned to make it matter.